Why Was Facebook Down? The Hidden Reality of Internet Monopoly & a Solution

On October 4, 2021, Facebook, WhatsApp, and Instagram went offline for about six hours. Facebook attributed the outage to a faulty configuration change on its own network, but the timing drew attention to something bigger: one day earlier, another whistle-blower from Facebook had gone public with thousands of internal documents that, she says, show that Facebook knows about the social harm caused by misinformation on its platform and chooses to ignore the danger for the sake of profit.

The whistle-blower is Frances Haugen. In her interview with 60 Minutes, she goes in depth about how she had been working as a data scientist at Facebook since 2019 to fight misinformation on the platform, and how Facebook intentionally turned off safety measures after the 2020 US election so that the platform could profit more, rather than stopping hate speech and violence.

The documents she revealed suggest that Facebook is one of the companies most capable of detecting and stopping misinformation, using both algorithms and human fact-checkers. In spite of this capability, it chooses to allow content that makes people angrier. When users have an emotional response, they tend to return to the platform more often and spend more time there, which leads to better profits for Facebook.

A brief look at past Facebook scandals

During the 2016 US presidential election, an analytics company named Cambridge Analytica acquired Facebook user data and used it to target political ads for the Trump campaign. The targeting was heavily biased: ads were tailored to each citizen's political views to invoke an emotional response and win them over, in part by feeding them misinformation and lies. This led to a massive public outcry, including Senate testimony from CEO Mark Zuckerberg himself.

How Facebook uses machine learning algorithms

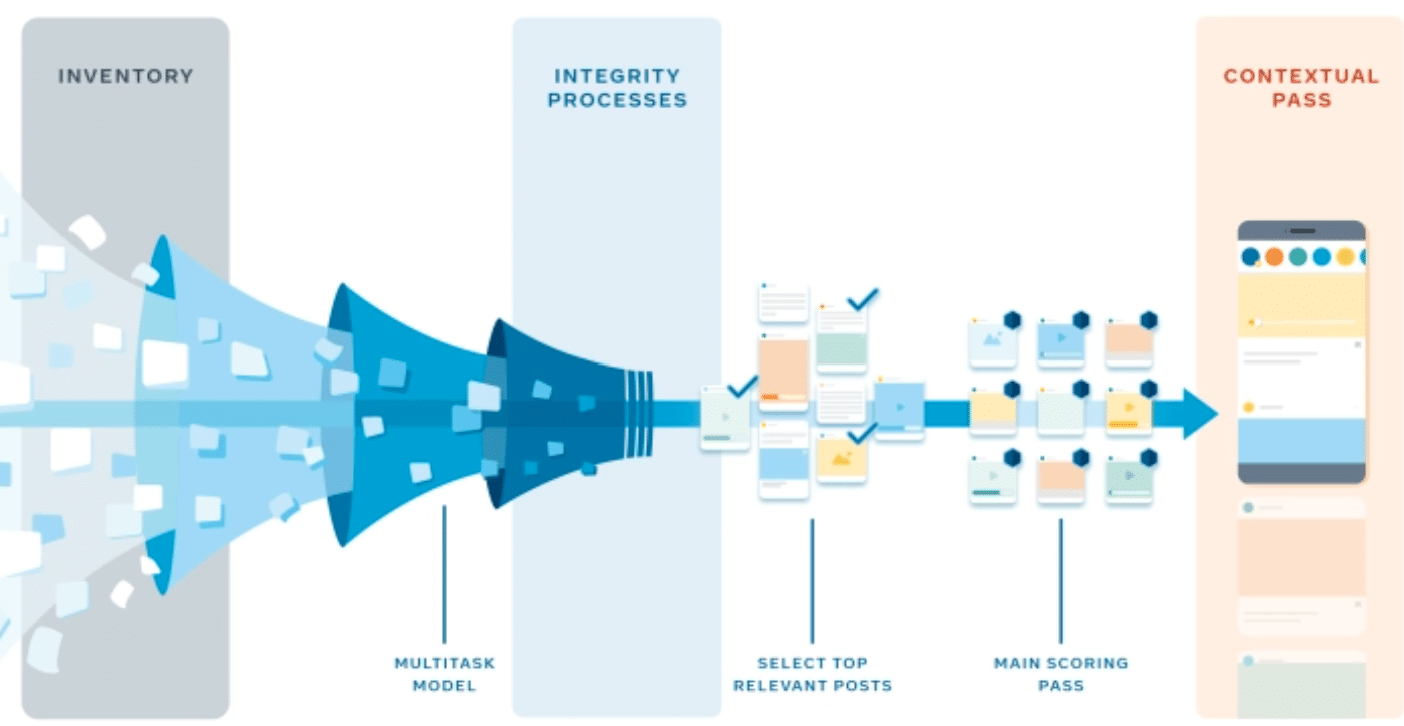

Facebook is the largest social network in the world, with the greatest number of active users, and every day those users produce billions of new posts, images, videos, and messages on its platform. To process all of this, Facebook needs automated algorithms that can analyze the content and serve it to the right people. For example, if your favorite movie star has just released a new movie, Facebook will find posts about it and put them in your news feed. Facebook is good at serving the right content because it uses state-of-the-art machine learning algorithms to analyze the posts and user content coming in every second.

One sub-field of machine learning is natural language processing, which uses algorithms to understand the text that people write and extract meaning from it. This helps Facebook's algorithms understand the content of posts and then show them to the relevant people. Read more about NLP research at Facebook AI Labs.

What is natural language processing (NLP)?

Natural language processing (NLP) is a field of computer science in which text written by human beings in different languages is processed by algorithms to extract its meaning and content. For example, a common task is to find the topic of a post: is it about sports, is it a news article about finance, or is it the personal blog post of a traveler?

NLP-based topic classification is the kind of technique that services such as Amazon’s Kindle can use to recommend new books to you, based on the topics you liked previously. Another popular task is sentiment analysis, which extracts the emotions from a piece of writing. Is this tweet angry, or is it praising another person? What is the user feeling in this review of restaurant food on Google Maps? Companies regularly use sentiment analysis to understand customers' reactions to their products, and it is now an integral part of online businesses.
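To make the idea of sentiment analysis concrete, here is a minimal sketch in Python. It assumes a tiny hand-written word list (the `POSITIVE` and `NEGATIVE` sets are hypothetical and invented for illustration); production systems learn these associations from large labeled datasets rather than from a fixed list.

```python
import re

# Hypothetical mini-lexicon for illustration only.
# Real systems learn word-emotion associations from labeled data.
POSITIVE = {"great", "love", "delicious", "friendly", "amazing"}
NEGATIVE = {"terrible", "hate", "cold", "rude", "awful"}

def sentiment(text: str) -> str:
    """Label a text by counting positive and negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("The food was delicious and the staff were friendly"))  # positive
print(sentiment("Terrible service, the soup was cold"))                 # negative
```

A word-counting approach like this misses sarcasm, negation ("not great"), and context, which is one reason the learned models described later in this article have largely replaced it.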

There are also other tasks like natural language generation, where you input the first few words of a sentence and the algorithm continues writing for you. This is how many modern chatbots work. The best-performing NLP algorithms nowadays are deep learning models, such as BERT and GPT.

How do deep learning algorithms work?

These deep learning algorithms need a labeled dataset to train on. In topic classification, for example, each text in the dataset must be labeled with its topic, such as news about elections, financial news, and so on.

The deep learning algorithm then learns from millions of labeled examples, and afterward it can accurately tell the topic of texts it has never seen before. This is formally called supervised learning. The same goes for sentiment analysis: the dataset must be labeled with tags like positive/negative, happy/sad, angry, and so on. The sentiment analysis algorithm will then be able to judge the emotions in never-before-seen posts.
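The supervised learning loop described above (label examples, train, then predict on unseen text) can be sketched in a few lines of Python. This example swaps the deep learning model for a much simpler Naive Bayes classifier so that it runs with the standard library alone; the six training sentences and the function names `train` and `predict` are invented for illustration.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())

def train(labeled_examples):
    """Count how often each word appears under each label."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in labeled_examples:
        label_counts[label] += 1
        word_counts[label].update(tokenize(text))
    return word_counts, label_counts

def predict(model, text):
    """Return the label with the highest Naive Bayes log-probability."""
    word_counts, label_counts = model
    vocab = {w for counts in word_counts.values() for w in counts}
    total_docs = sum(label_counts.values())
    best_label, best_score = None, float("-inf")
    for label in label_counts:
        score = math.log(label_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for word in tokenize(text):
            # Add-one smoothing so unseen words do not zero out the score.
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Tiny hypothetical labeled dataset; real systems use millions of examples.
TRAINING_DATA = [
    ("the team won the football match", "sports"),
    ("the striker scored a late goal", "sports"),
    ("the coach praised the players after the game", "sports"),
    ("the stock market fell sharply today", "finance"),
    ("the bank raised interest rates again", "finance"),
    ("investors bought shares as prices rose", "finance"),
]

model = train(TRAINING_DATA)
print(predict(model, "players celebrated the winning goal"))         # sports
print(predict(model, "shares and interest rates moved the market"))  # finance
```

Neither test sentence appears in the training data, yet the model labels both correctly because it has learned which words tend to go with which topic. Deep learning models follow the same train-then-predict pattern, only with far richer representations of the text.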

How Facebook’s news feed algorithm works

Facebook’s news feed algorithm promises to show the most relevant posts for each individual user, based on what they like. The algorithm learns the likes, dislikes, and preferences of the user from their previous posts and interactions, and then it ranks new posts and prioritizes the ones that best match the topics that user is known to like.
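As a rough illustration of preference-based ranking, the sketch below learns topic weights from a user's past interactions and sorts candidate posts by those weights. It is a toy model under simple assumptions, not Facebook's actual system: the function names, the topic labels, and the single-topic-per-post structure are all hypothetical.

```python
from collections import Counter

def learn_preferences(interactions):
    """Turn a list of topics the user interacted with into normalized weights."""
    counts = Counter(interactions)
    total = sum(counts.values())
    return {topic: n / total for topic, n in counts.items()}

def rank_posts(posts, preferences):
    """Sort posts so that topics the user prefers come first."""
    return sorted(
        posts,
        key=lambda post: preferences.get(post["topic"], 0.0),
        reverse=True,
    )

# Hypothetical interaction history: one entry per like, comment, or click.
past_interactions = ["movies", "movies", "football", "movies", "cooking", "football"]
preferences = learn_preferences(past_interactions)

candidate_posts = [
    {"id": 1, "topic": "finance"},
    {"id": 2, "topic": "movies"},
    {"id": 3, "topic": "football"},
    {"id": 4, "topic": "cooking"},
]

ranked = rank_posts(candidate_posts, preferences)
print([post["id"] for post in ranked])  # [2, 3, 4, 1]
```

Whatever quantity the `key` function rewards is what the feed ends up promoting. Here it is topic match, but a platform could just as easily rank by predicted engagement instead.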

Facebook is probably using your data from Instagram and WhatsApp too, to serve you posts and ads. It has fine-tuned its news feed algorithms for over a decade, using the trillions of posts from billions of people on its platform. It also has systems that can censor any topic that Facebook executives and moderators want to suppress, e.g. hate speech and misinformation about certain people.

Why Facebook’s algorithms are serving anger-inciting posts

The latest Facebook Files reveal many immoral practices and policies at Facebook, including tuning its algorithms to present anger-provoking content to its users, because research shows that users are more likely to read and engage with emotionally arousing content than with other content.

As a result, Facebook's news feed and ad-serving algorithms are biased towards content that incites an emotional response from users, because those users then spend more time on Facebook and click on more ads, so Facebook earns more. This is outrageous, since this policy has contributed to political conflicts and violence in the real world that originated and grew on Facebook.

How Facebook’s algorithms are ignoring social harm for profits

The thousands of documents revealed by whistle-blower Frances Haugen are meant to prove that Facebook executives intentionally turned off the safety filters against violent and dangerous content in exchange for greater user engagement. The aim was to maximize profits rather than to protect users against hateful content and misinformation, which can lead to severe political discord and cause harm to societies all around the world.

How Instagram increases depression and suicidal thoughts in teenagers

Another shocking finding in the documents is a study showing that teenage girls on Instagram are becoming more depressed and developing eating disorders because of the content they see there. Posts that were meant to be fitness videos and dieting guides instead created feelings of low self-worth and depression in young users, and some even felt more inclined towards suicide because they did not feel as good as the people in those posts.

What is extremely sad is that, in spite of knowing how damaging these Instagram posts are, the executives chose to keep serving that content. In fact, they saw greater engagement from those teenagers, because depressed teenagers appear to get into a feedback loop in which greater depression leads them to use Instagram for longer periods of time.

Built-in addictiveness of online apps

Just one generation ago, we were not carrying supercomputers in our pockets that could instantly communicate with other computers across the globe, all for free. Now our phones buzz all day long, and our brains continuously pay attention to them while ignoring other things. This is not your fault: apps are addictive by design.

A digital detox can help you but only when you really want to help yourself

Just like winning points in a game, every Facebook post and message that catches your attention gives you a burst of dopamine, and over the span of just hours or days, your brain automatically learns to pay more attention to sites like Facebook. And guess what? Facebook specifically wants you to stay in its apps for longer. That’s why notifications are hard to turn off, and why there are annoying features like the double-tick “seen” on every message or the “last seen” on Messenger, which make you feel more obliged to reply. You can see how this is harming people around you, of all ages and societies. How can people focus on their important work and studies when their phones are pinging all day?

Future Social Media: Can Blockchain help?

Facebook is controlled by its executives, who are in turn driven by profit-seeking investors. This is the main problem in the situation described above. An innovative social network built on decentralization might therefore be a potential solution to misinformation and the manipulation of social media content. What if ad money was not paying for free social media, and instead there was a different business model?

This idea of decentralization has been growing in recent years. We are currently using Web 2.0, which relies on centralized services like Facebook and Google, but a decentralized web (Web 3.0) would not be owned by corporations. Take Bitcoin for example, the world's most famous and most valuable cryptocurrency: it runs without any central authority and belongs to no single individual or entity.

There are definitely problems with Bitcoin, such as its use for money laundering and other illegal activity, but some of the technologies inside Bitcoin will change the world. Take the blockchain for example. It is essentially a database, or ledger, that stores information in such a way that it cannot be changed without detection, so whatever was written in the past can be easily verified using cryptographic hashes. Because blockchain is a general-purpose technology, it can be used in many situations where people need to establish trust without relying on a central authority.
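The way cryptographic hashes make past records verifiable can be shown with a minimal hash-chained ledger in Python. This is a single-machine sketch under simplifying assumptions: it has no network, no consensus, and no mining, and the helper names `block_hash`, `add_block`, and `is_valid` are invented for illustration. It demonstrates only the tamper-evidence idea, not a full blockchain.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block

def block_hash(index, data, previous_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def add_block(chain, data):
    """Append a block that is linked to the current end of the chain."""
    previous_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "data": data,
        "previous_hash": previous_hash,
        "hash": block_hash(index, data, previous_hash),
    })

def is_valid(chain):
    """Recompute every hash and check that each block links to the one before."""
    expected_previous = GENESIS_HASH
    for block in chain:
        if block["previous_hash"] != expected_previous:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["previous_hash"]):
            return False
        expected_previous = block["hash"]
    return True

ledger = []
add_block(ledger, "Alice pays Bob 5")
add_block(ledger, "Bob pays Carol 2")
print(is_valid(ledger))  # True

# Rewriting history changes the block's contents, so its stored hash no longer matches.
ledger[0]["data"] = "Alice pays Bob 500"
print(is_valid(ledger))  # False
```

Because each block's hash covers the previous block's hash, changing any old record would require recomputing every later block as well. In a real blockchain, many independent participants hold copies of the chain, which is what makes such a rewrite impractical rather than merely detectable.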

Now, how can blockchain change the business model of social media? That is yet to be seen, but take IPFS as an example. It challenges the dominance of companies like Amazon and Google in cloud data storage by storing and distributing massive amounts of files all over the internet, using storage farms owned by individuals, not companies. These individuals are paid in a cryptocurrency (Filecoin), which uses a blockchain, and cryptographic proofs are used to verify that the data is actually being held by the data farmer. Thus, networks like IPFS might just break the centralized control of corporations over the cloud.

Similarly, for preventing the spread of misinformation, there is Augur, a decentralized prediction market built on a blockchain, in which facts are verified in a crowdsourced way. Those who report truthfully are rewarded once the information is verified in the long run, while those who provide wrong information actually lose money. This creates a new incentive structure, unlike the way Facebook's fact-checking currently works.

It is still early days for Web 3.0 technologies like these, but it will be interesting to see how these new ideas solve the problems of today.

Conclusion

Machine learning is a general-purpose technology, and an ML model will only learn what you teach it to. That means the same tools that can filter harmful content can also be tuned against the public interest, as certain companies are doing. While laws like GDPR have gone some way toward safeguarding the public against the misuse of big data and machine learning, we still have a long way to go in cases like this Facebook scandal.

This is not the first time Facebook has shown a greater interest in profits while ignoring the social damage it is doing. In the coming years, many predict that the Facebook family of apps (Facebook, Messenger, WhatsApp, Instagram) will be broken up into separate entities by regulators in order to stop the centralized control, the massive abuse of personal data, and the manipulation across the platforms.

Feel free to let us know your thoughts in the comments. We read every comment!